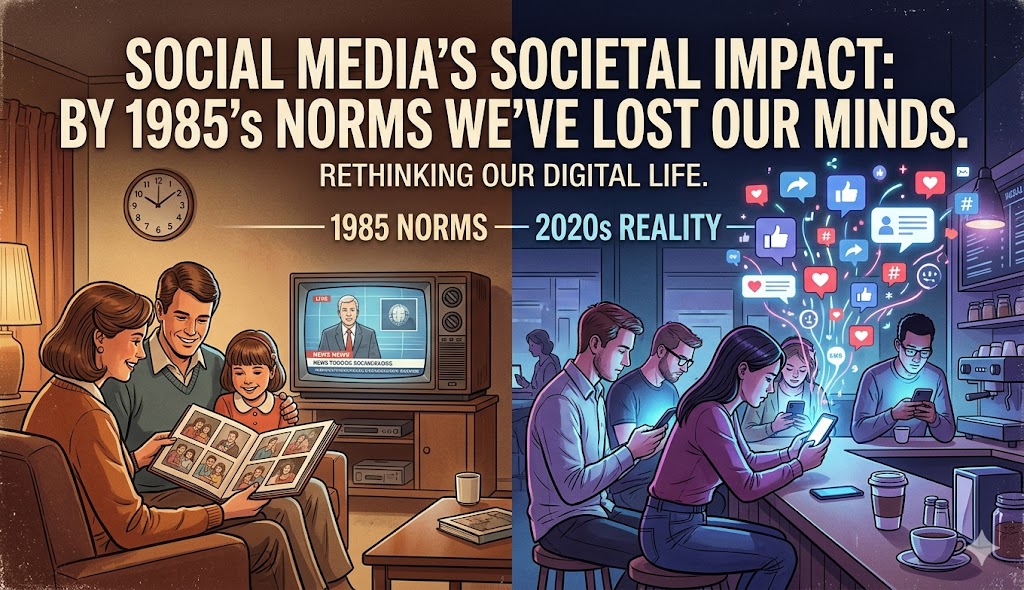

Naturally judgemental as we are, we’ve become obsessed with sniffing out the hand of AI in every article, social media post, and email we receive. Digital town squares have spiralled into a self-righteous, finger-pointing symphony of “gotcha moments,” with armchair critics yelling “AI wrote this!” from the virtual rafters, as if they’ve caught a sinner red-handed in the confessional.

I say: So what?

We don’t judge an architect by whether they used a pencil or CAD software; we judge the building. We don’t judge a soup by whether the carrots were diced with a five-hundred-dollar Japanese blade or a food processor from Walmart; we judge the soul of the broth. Likewise, we don’t judge a war correspondent’s photo by whether it was captured on a roll of film or a digital sensor, but by the raw, visceral tragedy it forces us to witness.

Our current obsession with the AI vs. human dichotomy is a distraction from the fundamental issues: quality and intent. Currently, we’re lingering in the infancy of AI content, where output is just “off” enough to trigger a gag reflex. Much as the fork was once decried as a devilish excess when introduced to European tables, we view AI-generated work as a transgression against our romanticism for the “thumbprint of the maker.”

This disdain is a temporary posture. History suggests that today’s AI-slop will be accepted as tomorrow’s standard. Whenever you say “AI can’t do that,” be sure to add “not yet.”

Consider the transition from candlelight to the lightbulb. Initially, electric light was a harsh, flickering, and unstable glow that made people look as if they’d been in a cellar for a week. People yearned for the warmth of a candle. However, the lightbulb didn’t remain a gimmick. It became reliable, ubiquitous, and eventually so superior that lighting a house with candles was relegated to romantic Saturday night dinners. Currently, AI content is the flickering bulb, and the electricity behind it, the Large Language Models, is becoming more stable every day.

We have a long, storied history of buckling to convenience over authenticity.

Consider the tomato. We traded the bruised, misshapen, flavour-bursting heirloom for a perfectly spherical, chemically ripened red orb that tastes of wet cardboard. Individually, we recognize the inferiority; collectively, we’ve sanctioned this “tomato-slop” because it meets supply-chain requirements and looks good in a salad.

Consider the open office. We surrendered the dignity of the private door for the forced collaboration and communal din of a warehouse setting. Though these floor plans are the antithesis of deep thinking and focused work, we’ve recalibrated our brains to adapt to the “interruption-slop” that’s now the workplace norm.

Consider Auto-Tune. Before it, in the 1980s, the synthesizer was lambasted as the death of the hard-won note, the belief being that music should be a struggle, not a gift from a machine. Nevertheless, we’ve become so accustomed to the clinical gloss of digital correction that unprocessed human voices now strike us as amateurish.

Speaking of “authenticity,” you’ve likely noticed a fervent defence from publication editors and hiring managers, stipulating on mastheads and in job postings: “No AI-generated content.” Requesting that submitted work bear the thumbprint of the maker is futile. It’s no secret that those who possess both the technical acumen to wield an LLM and the sophisticated eye of a seasoned editor are bypassing the human-signature requirement with ease. With the right editing skills and a sense of what resonates, using AI to produce work that withstands scrutiny is quite possible. AI-slop isn’t the presence of AI; it’s the failure to polish the output until it’s indiscernible from human craftsmanship.

In the near future, the skills employers will find worth paying for won’t belong to those who can simply prompt AI, but rather to those who can turn its output into work that reads as human.

The crux of the issue is that AI isn’t a static tool; it’s a mirror that learns to resemble the person using it. As we freely (and that is the key word) engage with AI models, we aren’t merely “using software.” We’re knowingly training a digital apprentice in the intricate nuances of our own humanity, teaching the machine to bridge the gap between how we think and how we express ourselves.

Nvidia’s President and CEO Jensen Huang noted this shift during a fireside chat at the 2024 World Government Summit: “We’ve closed the gap between the way we think and the way we manifest our thoughts. We are now in an era where the computer is no longer just a tool, but a collaborator that understands intent.”

This “intent” is the fault line of current friction. Recent analyses, such as journalist Taylor Lorenz’s Substack article “How Much of Substack Is Actually AI?”, reveal an unsettling reality: a significant portion of best-selling newsletters on Substack now lean on LLMs to churn out high-volume, low-substance filler. At the same time, the data also suggests the “Capability Absorption Gap” (the distance between AI’s potential and our actual utilization) is narrowing. We’re beginning to quiet our anxieties and embrace the machine’s output. Today, nearly every professional email is nudged or drafted by “Smart Compose.” When Gmail anticipates our next thought, we don’t recoil and yell “Bot!”; we mindlessly hit the tab key. We’ve accepted a similar hyper-personalization in our newsfeeds; in our quest for convenience, we’re allowing algorithms to become the preeminent authorities over our subconscious.

Here lies the uncomfortable truth: if we loathe AI’s encroachment—if we truly despise its erosion of our social fabric and the steady atrophy of our critical thought—why do we refuse to stop clicking? Surely we know by now that every prompt we feed it serves as a vital data point. We’ve become the unpaid faculty of the world’s most expansive laboratory, training the machine to mimic our unique cadences and proprietary problem-solving skills. In essence, we’re the dutiful gardeners watering the very weeds we claim are choking our lawn.

It’s safe to say that in the not-too-distant future, AI will own us more than we own it, if it hasn’t already. We’ve become dependent on its speed to keep up with a world that no longer rewards the slow, methodical “human” pace. In 2023, Bill Gates stated, “AI will be like having a personal assistant. It will know your interests and your style and will provide content that is tailored specifically for you.”

Notice the language: “it will know.” The power dynamic is shifting. We aren’t just using a tool; we’re feeding a digital ecosystem that’s rapidly dictating the parameters of our professional and social lives. Our dive into AI is akin to wading into a strong current; we might think we’re swimming, but we’re largely being swept downstream.

A bad post is a bad post, whether a human spent two hours on it or a machine created it in seconds. Technology isn’t the problem; the problem is that most human communication has become a performance of expertise rather than an exchange of value. We’ve been producing “human slop”—clickbait, rage-bait, and SEO drivel—for nearly two decades. AI is simply doing it faster and, increasingly, better.

Eventually, our brains will adjust. We’ll stop looking for the “AI-ness” and start looking for the value. If an article or video solves your problem or teaches you a skill, does it matter if the initial draft was generated by a machine learning algorithm? We’re rapidly moving toward a world where “AI-generated,” in the hands of those who possess the skills I mentioned earlier, is as invisible a label as “factory-made” on a pair of jeans.

One day soon, you’ll find that the content you once dismissed as “slop” is exactly what you’re looking for. It won’t feel artificial; it’ll just be the way things are. And honestly? Once the machine learns to perfectly replicate the specificity and nuance of a life lived, I think we’ll find its outputs quite palatable. We’ll realize that in our haste to build a better tool, we didn’t just automate the work; we automated “us.” By then, we’ll be too busy consuming the digital caviar served by AI to remember why we ever preferred the thumbprint of the maker.